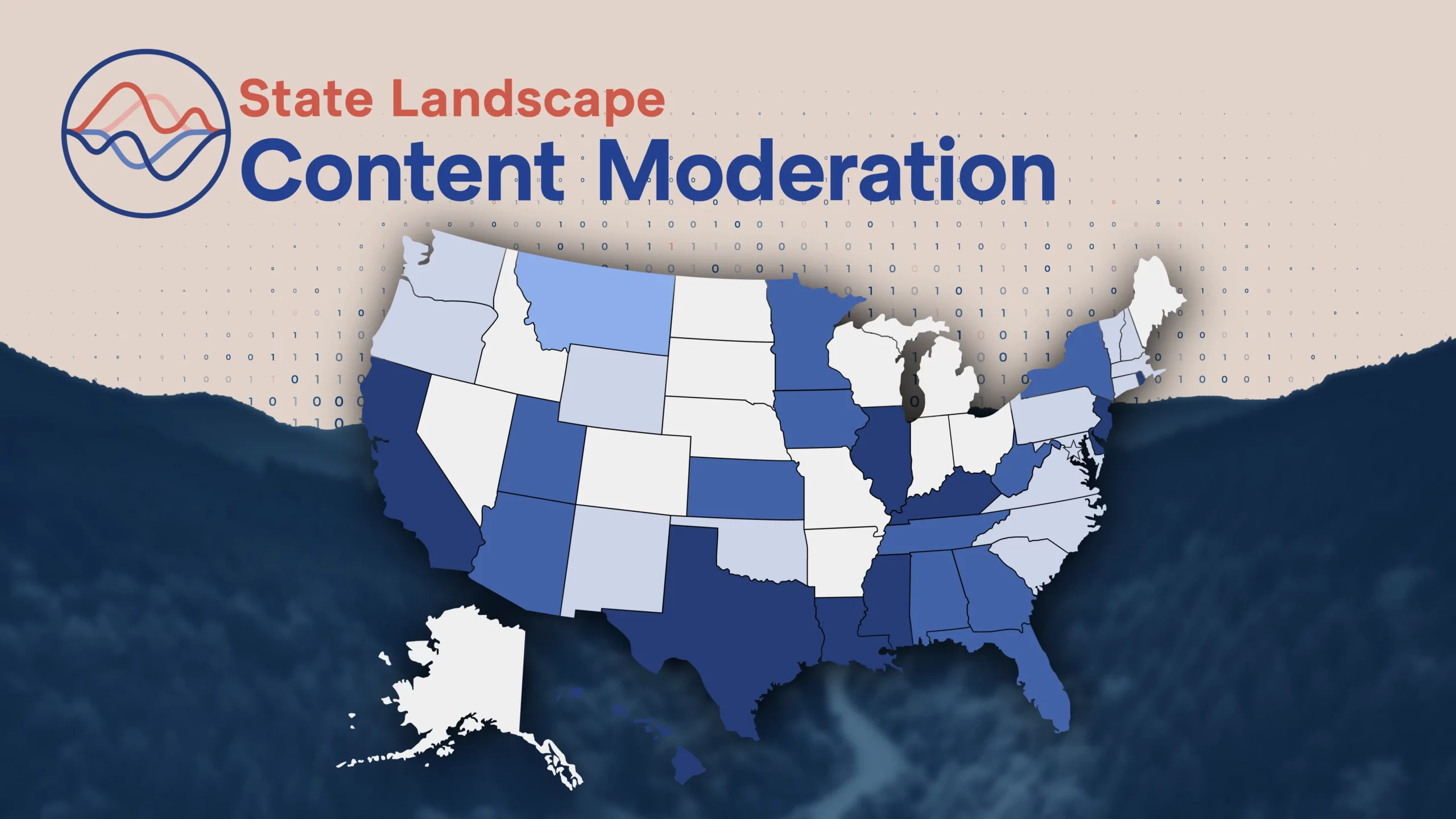

Content Moderation: How Did State Policymakers Legislate on Internet Policy This Year?

The 2023 state legislative sessions continued an upward trend noted in 2022: a rise in bills aimed at regulating online businesses. In 2023 alone, the first year of the biennium for many state legislatures, more than 200 pieces of legislation focused on regulating online content. These proposals came from both sides of the aisle, with states like Arizona, California, Georgia, Kansas, Minnesota, Montana, New York, and Tennessee, among others, all considering or enacting legislation regulating online services and third-party content. Many of these bills raise constitutional concerns, conflict with federal law including Section 230, and would place major barriers on digital services’ ability to restrict dangerous content on their platforms.

Regulating the Use of Algorithmically Informed Decision-Making

Unique to 2023 was the widespread launch of new artificial intelligence products, specifically large language models like ChatGPT. This coincided with a push by state lawmakers to introduce proposals regulating the use of algorithmically informed decision-making, including proposals that could affect how digital services moderate online content. Though most of the proposals focused on studying these technologies or creating oversight bodies to manage how AI is used, some went a step further by requiring specific algorithmic content moderation or creating a comprehensive AI regulatory framework. Because many online businesses continue to rely on algorithmically informed decision-making to moderate content, the questions of how, or whether, states can regulate social media and what is considered free speech online have become increasingly important.

“Censorship” and Forced Speech on Private Online Platforms

2023 also marked a shift in how state lawmakers approached content moderation proposals compared with recent legislative sessions. This was the first legislative session in which litigation over whether states can dictate how online businesses moderate content and speech on their platforms resulted in several important decisions. Notably, the Supreme Court recently granted certiorari to decide the ultimate outcome of NetChoice & CCIA v. Paxton and NetChoice & CCIA v. Moody. These landmark cases are likely to shape how user-generated content is treated moving forward. With that litigation pending, it is plausible that some states were hesitant to advance similar proposals limiting “censorship” on platforms. States like Arizona and Montana advanced proposals similar to the 2021 Texas and Florida laws, but none were signed into law in 2023. Many legislators made the measured decision to pause the advancement of this type of policy until the Supreme Court weighs in.

Another noteworthy proposal, California AB 886, introduced in February 2023, would establish a “link tax” under the false premise that digital services somehow “siphon” revenue away from news sites by linking to and providing a preview of the content on external websites. Notably, AB 886’s “non-retaliation clause” would force online businesses to link to content against their will: it would prohibit a covered platform from refusing to index content or changing the ranking, identification, modification, branding, or placement of the content of an eligible digital journalism provider. By compelling websites to link to content against their discretion, this provision clearly violates the First Amendment, and it would also incentivize poor-quality and clickbait journalism. Similar proposals have been considered or passed at the federal level as well as in Canada, New Zealand, the European Union, and Australia. California legislators have paused this effort until at least 2024 due to the many U.S. constitutional concerns and looming implementation issues in other countries. In Canada, some platforms have either stopped allowing links to Canadian news or are strongly considering doing so absent significant changes to the law, rather than face limitless liability and the uncertainty associated with the mandatory bargaining process.

Roundup of 2023 and What to Expect in 2024

Though children’s online safety and comprehensive data privacy may have taken up much of the oxygen in the state tech policy space in 2023, content moderation proposals were still widely considered by both Democratic and Republican legislators. With many lawsuits making their way through the courts, including NetChoice v. Bonta, TikTok & Alario, et al. v. Knudsen, NetChoice & CCIA v. Paxton, and NetChoice & CCIA v. Moody, lawmakers may have to adjust their approach to regulating online businesses in 2024, specifically when it comes to intermediary liability and user-generated content on private online platforms. If 2023 is any indication, legislators will likely remain interested in technology policy in 2024 and may continue to introduce policies that have broad impacts on consumers and businesses alike. While policymakers are appropriately interested in the digital services that make a growing contribution to the U.S. economy, it is imperative that proposals consider the broader legal and constitutional context and include stakeholder input.

See here for CCIA’s 2023 Content Moderation State Legislative Landscape.