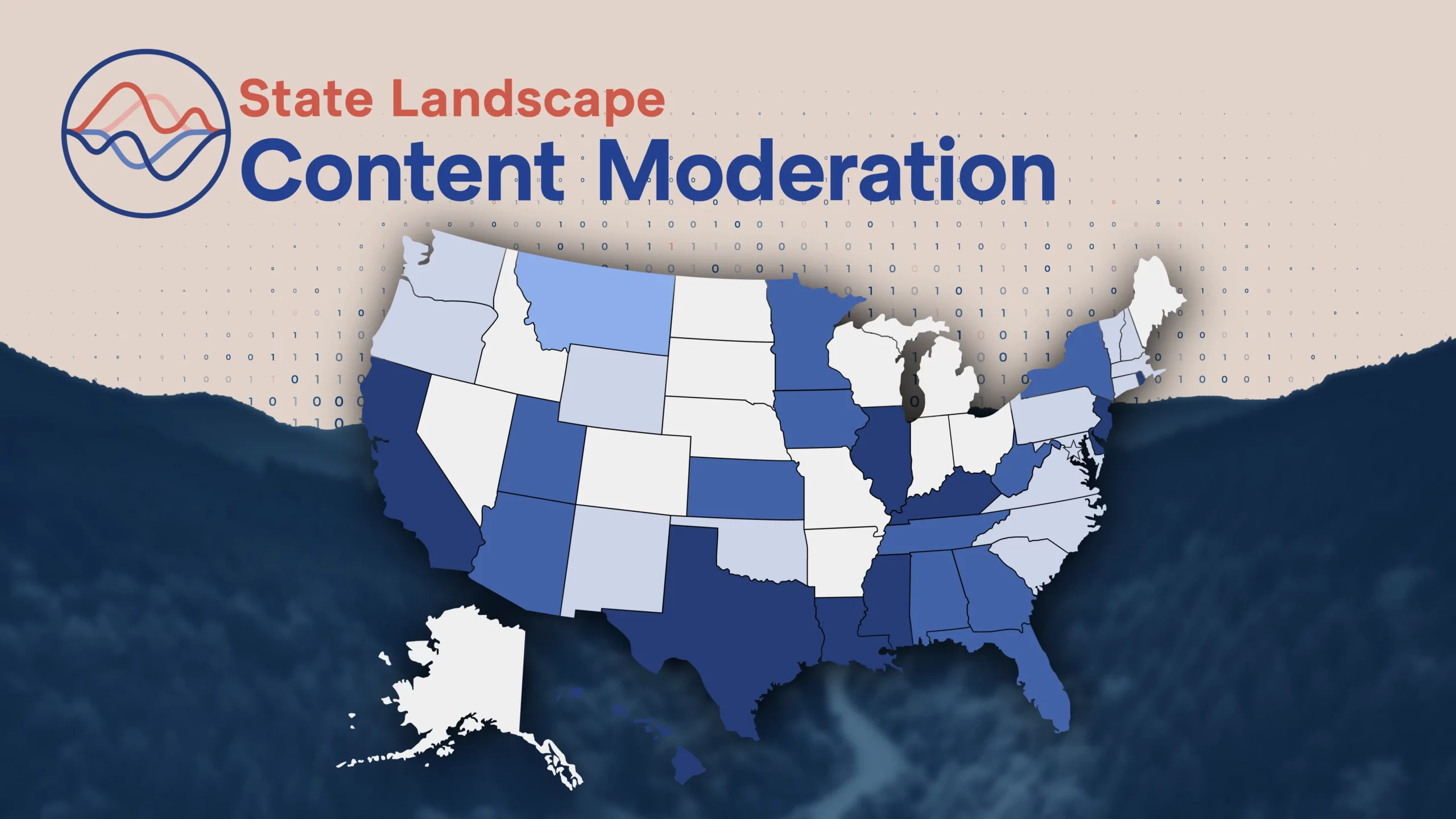

The High Cost of State-by-State Regulation of Internet Content Moderation

Content moderation bills continue to pop up in state legislatures, and one in Utah currently sits on the governor’s desk awaiting his veto or signature. While the bills vary in their content, most impose prescriptive state-by-state regulations governing internet content moderation practices. DisCo has previously covered the constitutional and legal problems with state content moderation bills. This post answers the question most relevant to taxpayers and internet users: How much would state content moderation bills cost?

To estimate the incremental costs of state content moderation bills, we need to establish a relevant cost baseline. At present, each internet platform can choose to moderate user-generated content using a variety of approaches, depending on the nature of the platform and the desired user experience. A common approach is to use automated content moderation to triage categories of prohibited content that are easy to classify, such as content for which reference databases already exist, and to reserve human moderators for the small fraction of content that is hard to categorize. Automation is common as a primary moderation tool because it is cost-efficient: for example, Microsoft and Alibaba both offer third-party automated content moderation services for about $0.40 per 1,000 images or posts at scale. This implies an automated content review cost of about $0.0004 per post ($0.40 / 1,000), which we will use as our baseline. We assume that under the state content moderation bills, these same automated content moderation costs would continue, with human moderator review added as needed to comply with state bill requirements.

Some of the state content moderation bills (including Utah’s S.B. 228) require that the platform send a detailed written notice to the user or the state government every time a post is moderated. Depending on the level of detail each bill requires, complying with the written notice requirement could require the involvement of a human moderator. Journalists covering content moderation at online platforms have found that even at the most efficient platforms, it takes a human moderator an average of about 150 seconds to review a post. This work requires skill and judgment; moderators often must quickly make complex decisions with limited context. Even assuming services could retain personnel to do this challenging work at $15/hour, the costs associated with this requirement would be at least $0.625 per moderated post (150 seconds is 1/24 of an hour, and $15 / 24 = $0.625). For each post requiring human moderation that could have been handled automatically in the absence of a state content moderation bill, moderation would cost more than 1,500 times as much as the baseline ($0.625 / $0.0004 ≈ 1,560).
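The per-post arithmetic above can be reproduced with a short sketch. It uses only the figures quoted in this post ($0.40 per 1,000 posts, 150 seconds per review, $15/hour); the function and variable names are illustrative and not drawn from any bill or vendor.

```python
# Figures quoted in the post; all names here are illustrative assumptions.
AUTOMATED_PRICE_PER_1000_POSTS = 0.40  # USD, third-party automated moderation
HUMAN_REVIEW_SECONDS = 150             # average human review time per post
HOURLY_WAGE = 15.00                    # USD per hour, assumed moderator wage


def automated_cost_per_post(price_per_1000: float) -> float:
    """Convert a per-1,000-posts price into a per-post cost."""
    return price_per_1000 / 1000


def human_cost_per_post(review_seconds: float, hourly_wage: float) -> float:
    """Labor cost of one human review at the given hourly wage."""
    return hourly_wage * review_seconds / 3600


baseline = automated_cost_per_post(AUTOMATED_PRICE_PER_1000_POSTS)
human = human_cost_per_post(HUMAN_REVIEW_SECONDS, HOURLY_WAGE)
multiple = human / baseline

print(f"Automated baseline:   ${baseline:.4f} per post")
print(f"Human review:         ${human:.3f} per post")
print(f"Multiple of baseline: {multiple:,.1f}x")
```

Changing the wage or the review time in the constants shows how sensitive the multiple is to those two assumptions.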

Some state content moderation bills (including Utah’s S.B. 228) also mandate that users be able to appeal moderation decisions, have their appeals reviewed by a human moderator, and even have the human moderator explain the moderation decision to the user. Depending on the nature of the appeal and the specific requirements of the bill, that could take anywhere from 5 minutes to half an hour of a human moderator’s time, which even at $15/hour would cost between $1.25 and $7.50. For each appeal that could have been handled automatically in the absence of a state content moderation bill, the appeal would cost roughly 3,000 to 18,000 times as much as the baseline.
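As a rough check on the appeal figures, the sketch below computes the cost of a 5-minute and a 30-minute appeal at $15/hour and compares each to the $0.0004 automated baseline. The names are illustrative; the inputs are the ones stated in this post.

```python
# Inputs stated in the post; names are illustrative assumptions.
HOURLY_WAGE = 15.00              # USD per hour
BASELINE_COST_PER_POST = 0.0004  # USD, automated review baseline


def appeal_cost(minutes: float, hourly_wage: float = HOURLY_WAGE) -> float:
    """Labor cost of one human-reviewed appeal of the given length."""
    return hourly_wage * minutes / 60


def multiple_of_baseline(cost: float) -> float:
    """How many times the automated baseline a given cost represents."""
    return cost / BASELINE_COST_PER_POST


for minutes in (5, 30):
    cost = appeal_cost(minutes)
    print(f"{minutes:>2}-minute appeal: ${cost:.2f} "
          f"({multiple_of_baseline(cost):,.0f}x baseline)")
```

The exact multiples are 3,125 and 18,750, which the text rounds down to 3,000 and 18,000.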

Not every moderated post is appealed, but with appeal rates of one in 40 to one in 10 moderated posts on some popular platforms in recent years, the costs would add up. With initial content moderation rates ranging from one moderated post per 20 users annually on some platforms to almost two per user annually on others, we can establish a plausible range of incremental compliance costs for a hypothetical startup with 20 million users.

- In the low case, where one in 20 users has a post moderated, one in 40 moderations is appealed, and each appeal takes about 5 minutes, that adds up to about $0.66 million in aggregate annually, or $0.033 per user annually:

  - Initial Content Moderation Costs Annually = 20 million users × 1 moderated post/20 users × $0.625 cost/moderated post = $625,000 annually

  - Appeal Costs Annually = 20 million users × 1 moderated post/20 users × 1 appeal/40 moderated posts × $1.25 cost/appeal = $31,250 annually

- In the high case, where two posts per user are moderated, one in 10 moderations is appealed, and each appeal takes about half an hour, that adds up to $55 million in aggregate annually, or $2.75 per user annually:

  - Initial Content Moderation Costs Annually = 20 million users × 2 moderated posts/user × $0.625 cost/moderated post = $25,000,000 annually

  - Appeal Costs Annually = 20 million users × 2 moderated posts/user × 1 appeal/10 moderated posts × $7.50 cost/appeal = $30,000,000 annually
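The two scenarios above can be expressed as a small model, which makes it easy to test other assumptions. This is a minimal sketch: the `Scenario` class and its field names are hypothetical, while the inputs (20 million users, 150-second reviews, $15/hour, and the stated moderation and appeal rates) come from this post.

```python
from dataclasses import dataclass

HOURLY_WAGE = 15.00   # USD per hour, as assumed in the post
REVIEW_SECONDS = 150  # average human review time per moderated post


@dataclass(frozen=True)
class Scenario:
    """Hypothetical container for one set of compliance-cost assumptions."""
    name: str
    users: int
    moderated_posts_per_user: float    # per year
    appeals_per_moderated_post: float  # appeal rate
    appeal_minutes: float              # human time per appeal

    @property
    def moderated_posts(self) -> float:
        return self.users * self.moderated_posts_per_user

    def moderation_cost(self) -> float:
        """Annual cost of human review for every moderated post."""
        return self.moderated_posts * HOURLY_WAGE * REVIEW_SECONDS / 3600

    def appeal_cost(self) -> float:
        """Annual cost of human review for every appeal."""
        appeals = self.moderated_posts * self.appeals_per_moderated_post
        return appeals * HOURLY_WAGE * self.appeal_minutes / 60

    def total_cost(self) -> float:
        return self.moderation_cost() + self.appeal_cost()

    def cost_per_user(self) -> float:
        return self.total_cost() / self.users


low = Scenario("low", 20_000_000, 1 / 20, 1 / 40, 5)
high = Scenario("high", 20_000_000, 2, 1 / 10, 30)

for s in (low, high):
    print(f"{s.name:>4} case: moderation ${s.moderation_cost():,.0f}, "
          f"appeals ${s.appeal_cost():,.0f}, total ${s.total_cost():,.0f}, "
          f"per user ${s.cost_per_user():.3f}")
```

The low case totals $656,250, which the text rounds to about $0.66 million, and the high case totals $55 million.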

For a startup, even the low-case total compliance cost of about $0.66 million annually could be ruinous, as startups typically have limited initial capital. The high-case total cost of $2.75 per user annually would be an enormous financial hit for platforms of all sizes, as even leading platforms have global average revenue per user of only about $10 annually. As a result, state content moderation bills could unwittingly create a significant barrier to entry, discouraging tech startups from operating in their states, reducing competition, inhibiting job creation from startups, and curtailing the range of online services available to residents of those states.
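To put the per-user figures in proportion, the sketch below expresses each case as a share of the roughly $10 annual average revenue per user cited above. Applying a leading platform's revenue figure to the hypothetical startup is an assumption made only for illustration, and the names are hypothetical.

```python
# Per-user figures from the post; the $10 revenue figure is the approximate
# global average for leading platforms, applied here as an assumption.
ANNUAL_REVENUE_PER_USER = 10.00  # USD
COST_PER_USER = {"low case": 0.033, "high case": 2.75}  # USD per user per year


def share_of_revenue(cost_per_user: float,
                     revenue_per_user: float = ANNUAL_REVENUE_PER_USER) -> float:
    """Compliance cost as a fraction of annual revenue per user."""
    return cost_per_user / revenue_per_user


shares = {name: share_of_revenue(cost) for name, cost in COST_PER_USER.items()}

for name, share in shares.items():
    print(f"{name}: {share:.2%} of annual revenue per user")
```

In the high case, compliance alone would absorb more than a quarter of that revenue per user.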

In addition, some of the state content moderation bills (such as Oklahoma’s S.B. 383) create legal risks for online platforms, either from enforcement actions by state governments for purported failures to follow costly state-specific content moderation rules, or from private rights of action arising from the same. Even in clear-cut cases with facts in the platform’s favor, such litigation could cost $80,000 through a Motion to Dismiss or $150,000 through a Motion for Summary Judgment. These costs would stack on top of the compliance costs. Startups with limited initial capital are reluctant to operate in states with elevated risk of legal costs, and may not only avoid locating offices and jobs in such states but also disallow residents of such states from using their platforms in order to de-risk.

In addition to their deleterious impacts on platforms, consumers, and competition, state content moderation bills would burden taxpayers with administrative costs, enforcement costs, and possible litigation damages. Administrative costs for most state content moderation bills would likely exceed $90,000 annually for each such state, and enforcement costs may be even higher. Given the constitutional concerns around such bills and the potential for legal challenges, each such state could easily find itself paying damages and fees.